AI coding assistants have become essential to modern software development, but cloud-based tools create risks for small and medium businesses (SMBs). These risks include exposing source code, increasing operational costs, and complicating compliance. An on-prem AI Coding Server provides the speed and productivity of cloud AI tools while keeping all source code, training data, and fine-tuned models securely inside a company’s environment. With predictable costs, air-gapped deployment, and a developer-friendly UX, software teams can use AI to write code safely and confidently.

Why AI Coding Tools Are Becoming Essential

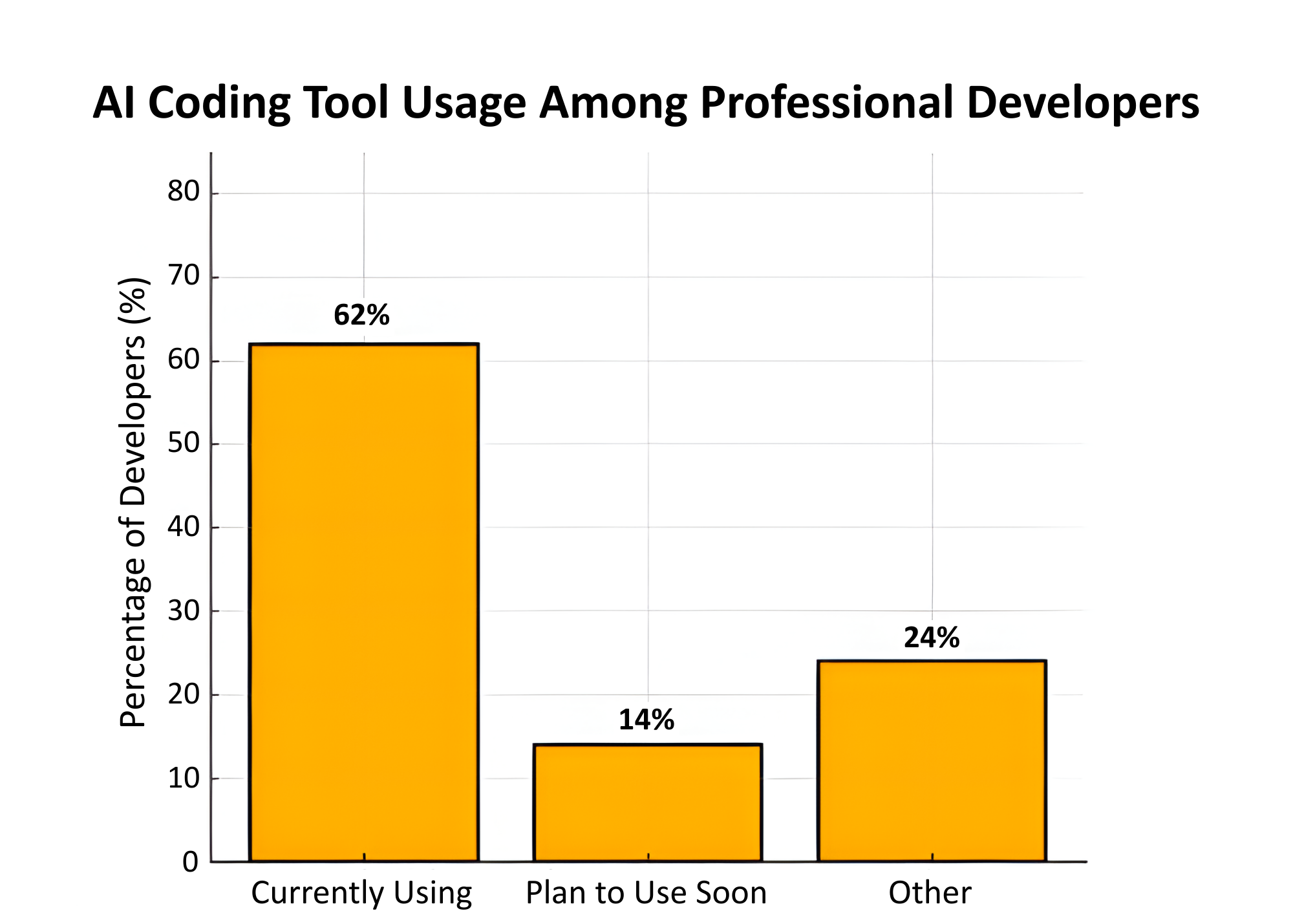

It’s no secret that software teams are becoming increasingly reliant on AI coding assistants. In fact, according to SecondTalent, 41% of all code in 2025 is AI-generated or AI-assisted, and 76% of professional developers either use AI coding tools or plan to adopt them soon. These AI coding assistants are popular for good reasons. They dramatically increase software development speed, improve accuracy, and free engineers from repetitive tasks. For example, one study found that developers using GitHub Copilot completed tasks up to 55% faster. As reliance on these AI tools grows, companies will require air-gapped coding servers capable of running generative AI models with a minimum of 20 billion parameters.

While today’s leading cloud-based coding large language models (LLMs), such as Anthropic’s Claude, are capable of sophisticated code generation, Unigen is charting a different path. Unigen is building a new generation of multi-module servers using AI modules with inference silicon that can provide efficient performance in a low-power, economical, Open Compute Project (OCP) format. These systems can support generative AI LLMs, such as OpenAI’s GPT-OSS-20B.

The Issue with Cloud-Based Coding

Companies must weigh several issues before allowing their software teams to use cloud services for coding.

Security

Every line of code is intellectual property (IP). Sending this code outside a company’s own environment and into a shared cloud can directly expose the company’s deepest secrets to others who may not have the best intentions.

In fact, even major providers have stumbled. In 2024, Microsoft Copilot inadvertently exposed private GitHub repositories from 16,000+ organizations, leaking over 300 credentials and 100 internal software packages through Bing’s caching system.

IP Contamination

When generative models are trained on or interact with mixed datasets, there is a risk that the company’s code becomes entangled with open-source code under unclear licenses. As a result, a company may ship code that contains open-source material without having clean title to the IP.

According to TechTarget, “coding assistants might generate large chunks of licensed open source code verbatim, which leads to IP contamination in the new codebase.”

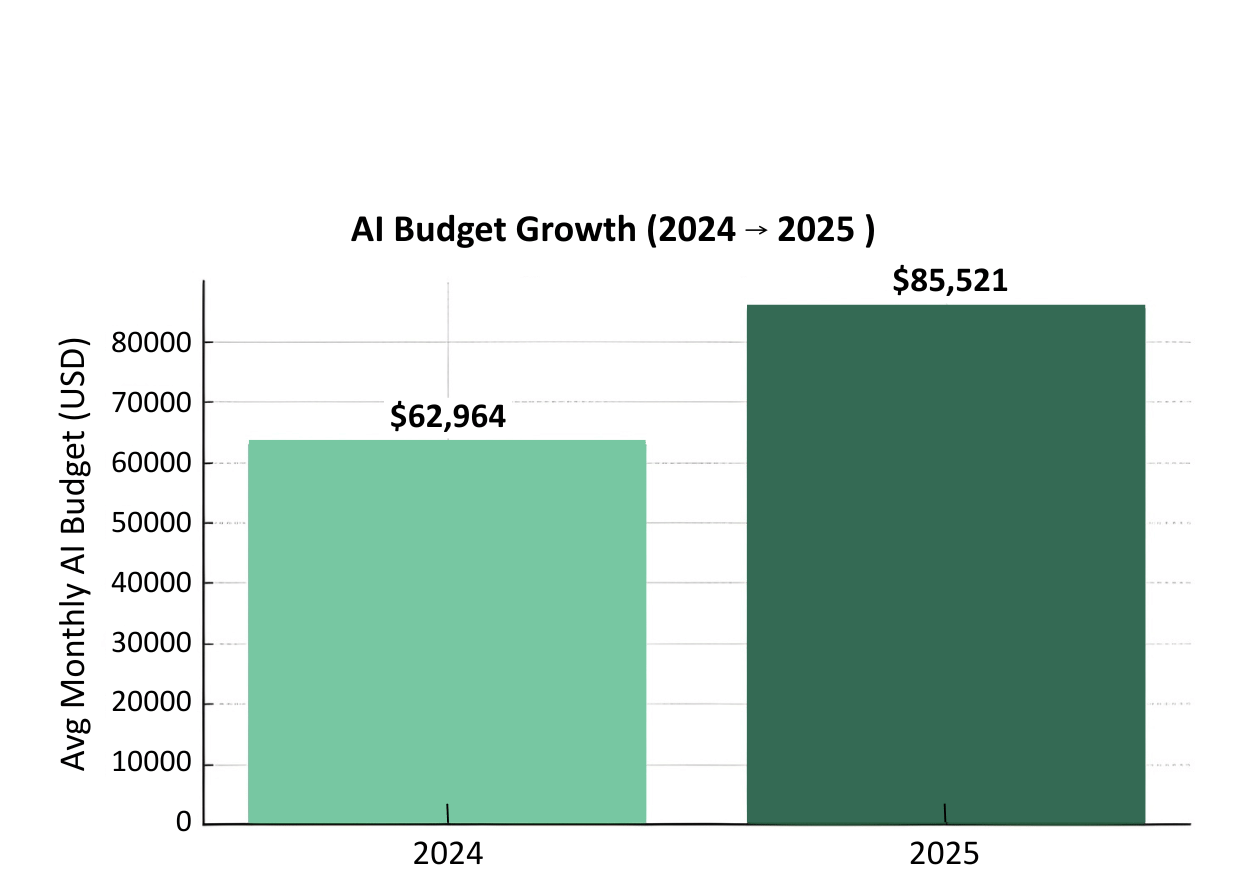

Expanding Costs

The cost of using cloud AI computing resources continues to grow with no built-in ceiling. On top of that, many teams discover, before a critical project is even complete, that producing production code costs much more than they expected.

According to Ficus Technologies, in 2025, companies spent $30,000 to $80,000 per year just to keep models running at scale. Additionally, there are hidden costs like MLOps operations and model retraining.

Solution: A Secure On-Prem Alternative

One solution to these issues is to confine AI coding to an air-gapped, on-prem server. This solution protects source code and IP from being intercepted by bad actors. Additionally, an on-prem server can be managed so that it uses only the data and GenAI solutions controlled by the corporation and its IT experts. Finally, with a wholly owned or leased server, the costs are well understood at the outset, eliminating surprise token spikes or compute overage charges. The result is a cloud-quality AI coding platform that delivers security, control, and financial clarity.

How the Unigen AI Coding Server Works

IT Setup and Configuration of AI Coding Server

Deployment begins with standard enterprise hardware procedures. IT teams unpack and rack-mount the server, then connect it to the local private network according to organizational standards. Network security configuration follows established enterprise practices, including firewall rules, VLAN segmentation, and port allowlisting, to ensure the server operates within defined security boundaries. The system arrives with a pre-installed operating system and software stack designed to simplify diagnostics and streamline installation of the AI coding environment.
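
As a rough illustration of the allowlisting idea, the sketch below checks whether a client connection falls inside a permitted subnet and port. The subnet, the port numbers, and the function name are hypothetical and are not taken from Unigen’s software. In practice this enforcement would live in the firewall or switch configuration rather than in application code.

```python
import ipaddress

# Hypothetical values for illustration only: a developer VLAN subnet and
# the ports the coding server is assumed to expose.
ALLOWED_SUBNETS = [ipaddress.ip_network("10.20.30.0/24")]
ALLOWED_PORTS = {443, 8443}


def is_connection_allowed(client_ip: str, port: int) -> bool:
    """Return True only if the client is in an allowed subnet and the port is allowlisted."""
    address = ipaddress.ip_address(client_ip)
    in_subnet = any(address in network for network in ALLOWED_SUBNETS)
    return in_subnet and port in ALLOWED_PORTS
```

Anything not explicitly listed is rejected, which is the defining property of an allowlist as opposed to a blocklist.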

The server integrates directly with your organization’s identity management infrastructure, supporting Active Directory, Lightweight Directory Access Protocol (LDAP), and single sign-on (SSO) solutions to maintain consistent authentication across your environment. IT administrators establish resource allocation policies that define parameters such as concurrent user limits and token consumption thresholds, ensuring optimal performance and fair resource distribution. Access privileges are granted based on specific project requirements, allowing granular control over who can use the AI coding capabilities. Security policies and data governance rules are configured to align with organizational compliance requirements, while code repository access and permissions are established to control how the AI interacts with existing codebases. Once configuration is complete, IT performs connectivity testing and initial validation to ensure the system is ready for production use.
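
To make the resource allocation policies more concrete, here is a minimal sketch of how a concurrent user limit and a token consumption threshold could be checked. The class name, field names, and the per-user daily limit are assumptions made for illustration; the actual policy format of the AI Coding Server is not described in this paper.

```python
from dataclasses import dataclass


@dataclass
class ResourcePolicy:
    """Hypothetical policy object; the limits are illustrative, not product defaults."""
    max_concurrent_users: int
    daily_token_limit_per_user: int


def can_start_session(policy: ResourcePolicy, active_users: int) -> bool:
    """Allow a new session only while the concurrent user limit has not been reached."""
    return active_users < policy.max_concurrent_users


def can_serve_request(policy: ResourcePolicy, tokens_used_today: int,
                      tokens_requested: int) -> bool:
    """Allow a request only if it keeps the user within the daily token threshold."""
    return tokens_used_today + tokens_requested <= policy.daily_token_limit_per_user


# Example policy an administrator might define (values are made up).
policy = ResourcePolicy(max_concurrent_users=20, daily_token_limit_per_user=500_000)
```

Keeping the policy as plain data separates what IT configures from how the server enforces it.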

User Experience

The developer experience is designed to be intuitive and familiar, requiring minimal training or adjustment to existing workflows.

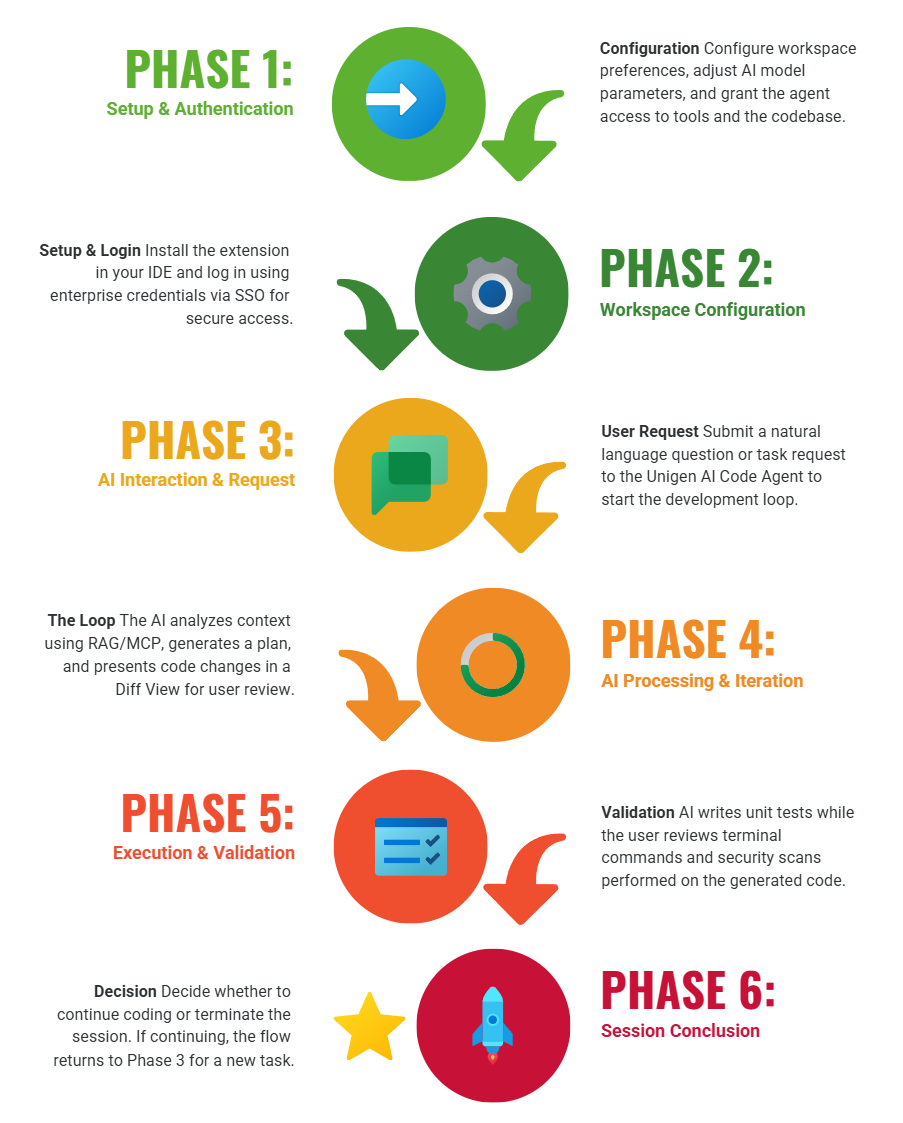

Onboarding

Users begin by installing a lightweight extension for their preferred integrated development environment (IDE) on their local client machine. Authentication occurs through a login process using credentials provided by IT for the AI Coding Server, using either SSO or standard enterprise credentials to maintain security consistency. Once authenticated, developers interact with the Coding Agent through a chat interface reminiscent of ChatGPT or Cursor, positioned conveniently within their IDE workspace.

AI Coding Configuration

Developers configure their workspace preferences and model parameters according to their specific needs and coding style. The system requires explicit permission grants for accessing the tools and code involved in the current workflow, including file system access, Git repository interaction, and terminal command execution. This permission model ensures transparency and maintains security boundaries while enabling the AI to function effectively.
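
The permission model can be pictured with a short sketch. The three tool categories come from the paragraph above; the class names, method names, and exception are hypothetical and do not represent the product’s API.

```python
from enum import Enum


class Tool(Enum):
    FILE_SYSTEM = "file_system"
    GIT = "git"
    TERMINAL = "terminal"


class PermissionDenied(Exception):
    """Raised when the agent tries to use a tool the developer has not granted."""


class WorkflowPermissions:
    """Hypothetical per-workflow record of explicit grants; nothing is allowed by default."""

    def __init__(self):
        self._granted = set()

    def grant(self, tool: Tool) -> None:
        self._granted.add(tool)

    def require(self, tool: Tool) -> None:
        # Called before any tool use; fails closed if no grant exists.
        if tool not in self._granted:
            raise PermissionDenied(f"{tool.value} access has not been granted")
```

In this sketch a developer would call `grant(Tool.GIT)` before the agent can touch the repository, while a terminal command would still be refused until `Tool.TERMINAL` is granted separately.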

Code Generation Planning

When a developer poses a question or task to the Unigen AI Code Agent, the AI analyzes the existing codebase context and tools to understand the project structure and conventions. It then creates a comprehensive plan of action outlining the optimal approach to accomplish the requested task. The system’s capabilities are strongest in Python, TypeScript, and JavaScript.

Human Review

Before any changes are applied, developers can preview proposed modifications in a side-by-side view, allowing careful review of what the AI intends to change. Developers maintain complete control, approving changes or requesting adjustments through additional prompts to refine the output.
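
For readers who want a feel for what such a preview contains, the sketch below uses Python’s standard `difflib` module to produce a text diff of a proposed change. It shows a unified diff rather than the side-by-side layout described above, and the function name and file labels are illustrative assumptions, not part of the product.

```python
import difflib


def preview_changes(original: str, proposed: str, filename: str = "example.py") -> str:
    """Return a unified diff between the current file content and the proposed content."""
    diff = difflib.unified_diff(
        original.splitlines(keepends=True),
        proposed.splitlines(keepends=True),
        fromfile=f"{filename} (current)",
        tofile=f"{filename} (proposed)",
    )
    return "".join(diff)


current = "def add(a, b):\n    return a - b\n"
suggested = "def add(a, b):\n    return a + b\n"
print(preview_changes(current, suggested))
```

Lines prefixed with `-` would be removed and lines prefixed with `+` would be added, which is the information a reviewer needs before approving the change.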

Built-In Testing and Automation

The system automatically generates unit test cases to verify code correctness and functionality, but all code execution requires explicit user approval at every step. This human-in-the-loop approach ensures developers maintain oversight throughout the coding process. Generated code undergoes security scanning, with results presented for review before integration into the codebase. This iterative process of requesting, reviewing, and refining continues as needed, creating a collaborative relationship between developer and AI agent.
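
The human-in-the-loop rule, where nothing runs without explicit approval, can be sketched as a simple gate. The function name and the shape of the `steps` and `approve` arguments are assumptions for illustration only; in the real product the approval would come from the developer through the IDE extension.

```python
def run_with_approval(steps, approve):
    """Run each (description, action) step only if approve(description) returns True.

    Returns two lists of descriptions: the steps that ran and the steps that were skipped.
    """
    executed, skipped = [], []
    for description, action in steps:
        if approve(description):
            action()
            executed.append(description)
        else:
            skipped.append(description)
    return executed, skipped
```

Because the gate is evaluated per step, declining one action (for example, a terminal command) does not block the developer from approving the others.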

Benefits of Unigen AI Coding Server

Unigen’s AI Coding Server offers a set of advantages designed with SMB software engineering teams in mind.

The system continuously learns from approved improvements, enabling your company to build proprietary fine-tuned AI coding agents over time. To further enrich this knowledge base, the platform supports the integration of code from pre-approved outside sources and libraries, allowing you to leverage industry-standard frameworks within your secure environment. This means your organization’s workflows, coding standards, and architectural preferences become part of your internal IP and aren’t shared with outside vendors or cloud models. Because the entire platform runs on-prem and is fully air-gapped, source code never leaves your environment, eliminating IP exposure and compliance risk.

Cost predictability is another key benefit. Unlike cloud-based AI tools with unpredictable token usage or abrupt pricing changes, Unigen provides a stable cost structure whether you lease or purchase the hardware. Development performance matches popular cloud tools such as Cursor, Windsurf, and Kiro, but with unlimited tokens and no API errors caused by network or rate-limit issues.

The platform significantly improves engineering efficiency, saving both senior and junior developers 10+ hours per week through automated unit testing, documentation, and CI/CD assistance. Productivity increases of 26% to 40% are common, contributing to a strong return on investment, often estimated at 30x or more. Customers who want to expand their capabilities can add coding servers at a fixed cost or upgrade their AI modules when higher-performance solutions are introduced. Modules are hot-swappable, so there is no downtime during an upgrade.

Finally, companies can customize the system’s AI agents or deploy specialized LLMs up to 20B parameters, enabling tailored workflows for unique software development needs.

Conclusion

The use of AI coding assistants is expected to grow significantly as the technology matures, but security, IP control, and cost stability must be prioritized. Unigen’s AI Coding Server gives companies a secure, private, and financially stable way to adopt state-of-the-art AI coding capabilities without sacrificing performance or developer experience. By bringing AI safely in-house, companies can accelerate software development, safeguard their IP, and keep costs low and predictable.

About Unigen’s Secure AI Coding Server: Poundcake-LLM

AI Capabilities

- Up to 20B-parameter Generative AI (LLM/VLM)

- 240 tokens/sec with 16 Unigen Tiramisu modules

Technology

- AIC EB202-CP Chassis, Motherboard, 2 x E3.S Boxes, Dual Power Supply

- AMD Genoa CPU with 16-48 Cores and AVX Media Decoding

- 8 – 16 Unigen E3.S Tiramisu AI Modules (up to 32 EdgeCortix SAKURA-II Processors)

- 256GB DDR5 Unigen RDIMMs

- 960GB Boot Drive (Data Drives Available)

- 2 x 1.92TB E1.S Unigen Data Drives

- 25GbE Networking

- Less than 1200 Watts total power consumption

- Ubuntu 22.04 Operating System

About Unigen Corporation

Founded in 1991, Unigen is an established global leader in the design and manufacture of OEM products including SSDs, DRAM modules, NVDIMMs, Enterprise IO, and AI solutions. Unigen also offers a full array of Electronics Manufacturing Services (EMS), including design, quick-turn prototyping, new product introduction, volume production, supply chain management, assembly & test, and aftermarket services. Headquartered in Newark, California, the company operates state-of-the-art manufacturing facilities (ISO-9001/14001/13485 and IATF 16949) in the heart of Silicon Valley as well as offshore in Vietnam and Malaysia. Unigen offers its products and services to customers worldwide targeting a broad range of end markets including automotive, computing and storage, embedded, medical, AI, robotics, clean energy, defense, aerospace, and IoT. Learn more about Unigen’s products and services at unigen.com.

Glossary

- Air-Gapped: A security measure in which a computer, network, or system is physically isolated from unsecured or public networks (such as the internet). This separation reduces the risk of unauthorized access, data leakage, or cyberattacks.

- ChatGPT: An AI-powered language model developed by OpenAI that can understand and generate human-like text. It is used for tasks such as drafting content, answering questions, generating code, and assisting with research.

- Cursor: An AI-enhanced code editor that predicts your next edit, answers questions about your codebase, and writes or modifies code using natural-language prompts.

- Generative AI (GenAI): A type of artificial intelligence designed to create new content such as text, images, music, or even code by learning patterns from existing data.

- Git Repository: A version-controlled storage location that contains a project’s files as well as the complete history of changes. It enables collaborative development, tracking of modifications, branching, and rollback to previous versions.

- Integrated Development Environment (IDE): Software that combines commonly used developer tools into a single GUI (graphical user interface) application. It typically combines a code editor, compiler, and debugger with an integrated terminal.

- Intellectual Property (IP): Creations of the mind, including inventions, literary and artistic works, designs, symbols, names, and images used in commerce. IP is protected by law (e.g., patents, copyrights, trademarks) to provide recognition and financial benefit to creators.

- JavaScript: A dynamic, high-level programming language commonly used to build interactive and responsive features on websites and web applications.

- Kiro: AWS’s AI-powered Integrated Development Environment (IDE). Unlike tools such as Cursor or Windsurf, Kiro is specification-driven: it converts prompts into requirements, designs, and validated code.

- Large Language Models (LLMs): Advanced machine-learning models trained on vast amounts of text data to understand, generate, and manipulate natural language. They support tasks such as summarization, reasoning, coding, translation, and conversational interaction.

- Lightweight Directory Access Protocol (LDAP): A protocol used to access and manage directory information over a network. It provides a lightweight alternative to the X.500 directory service, enabling centralized authentication, user management, and resource lookup.

- On-Premises (On-Prem): Software, hardware, or infrastructure that is installed and operated within an organization’s physical location, offering increased control over data, privacy, and customization compared to cloud-hosted solutions.

- Open Compute Project (OCP): An open-source initiative that develops and shares designs for energy-efficient, scalable data center hardware. OCP promotes innovation and cost savings in server, storage, and networking infrastructure.

- Python: A high-level, general-purpose programming language known for its readability, simplicity, and extensive ecosystem. It is widely used in fields such as web development, automation, data science, and AI.

- Single Sign-On (SSO): An authentication method that enables users to log in once and gain access to multiple systems or applications without re-entering credentials.

- TypeScript: A typed superset of JavaScript that adds static type checking and modern language features. It compiles to JavaScript and improves maintainability and reliability in large codebases.

- User Experience (UX): The overall quality of a user’s interaction with a product, system, or service, including ease of use, accessibility, efficiency, and satisfaction.

- VLAN Segmentation: A network design technique that divides a physical network into multiple virtual local area networks (VLANs). This enhances performance, improves security, and isolates traffic between groups of devices.

- Windsurf: A company that provides an AI-powered code editor designed to help developers write, understand, and modify code more efficiently through intelligent automation.